Wireless Network Experiment Methodology

Introduction


Wireless network experiments are often hard to replicate because the RF

environment can be highly dynamic and it is all but impossible to

ensure that the RF environment across the experiments is identical. It

is also difficult to directly compare the results across multiple

testbed facilities because the node topology, physical constraints,

and RF environment are almost always different on different

facilities. This inability to reproduce the results and compare

findings across the experiments is a serious impediment, which we

should overcome to bring scientific rigor to wireless

experimentation. In this project, we explore experimental techniques

to remove the environmental variations during protocol comparisons,

design a metric that captures the state of the network during a

wireless experiment, and compile a benchmark suite for network

protocols to standardize the protocol performance evaluations.


Concurrent Experiments


Researchers typically evaluate and compare protocols on the testbeds

by running them one at a time. This methodology ignores the variation

in link qualities and wireless environment across these

experiments. These variations can introduce significant noise in the

results. Evaluating two protocols concurrently, however, suffers from

inter-protocol interactions. These interactions can perturb

performance even under very light load, especially for timing and

timing-sensitive protocols. We argue that the benefits of running protocols

concurrently greatly outweigh the disadvantages. Although the wireless

environment is still uncontrolled, concurrent evaluations make

comparisons fair and more statistically sound. Through experiments on

two testbeds, we make the case for evaluating and comparing low

data-rate sensor network protocols by running them concurrently.
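
The statistical argument can be illustrated with a toy model. The sketch below is not taken from the project's experiments: the scores, the noise levels, and the function name `compare_spread` are all hypothetical. It assumes each run's performance is a protocol-specific baseline plus a shared environmental offset plus a small independent noise term, and it deliberately ignores the inter-protocol interactions mentioned above. Under those assumptions, the per-run difference between two protocols varies much less when both protocols see the same environment.

```python
import random
import statistics


def compare_spread(num_runs=200, seed=1):
    """Return (sequential_sd, concurrent_sd) of per-run score differences.

    Toy model with hypothetical numbers: score = baseline + environment + noise.
    Inter-protocol interactions are NOT modeled.
    """
    rng = random.Random(seed)
    base_a, base_b = 0.90, 0.88  # hypothetical baseline scores
    sequential_diffs = []
    concurrent_diffs = []
    for _ in range(num_runs):
        # Environmental offsets for two separate points in time.
        env_first = rng.gauss(0.0, 0.10)
        env_second = rng.gauss(0.0, 0.10)
        # Small protocol-specific measurement noise.
        noise_a = rng.gauss(0.0, 0.01)
        noise_b = rng.gauss(0.0, 0.01)

        # Sequential: A runs first, B runs later in a different environment.
        seq_a = base_a + env_first + noise_a
        seq_b = base_b + env_second + noise_b
        sequential_diffs.append(seq_a - seq_b)

        # Concurrent: both protocols experience the same environment,
        # so the environmental term cancels in the difference.
        con_a = base_a + env_first + noise_a
        con_b = base_b + env_first + noise_b
        concurrent_diffs.append(con_a - con_b)

    return (statistics.stdev(sequential_diffs),
            statistics.stdev(concurrent_diffs))


if __name__ == "__main__":
    seq_sd, con_sd = compare_spread()
    print(f"spread of A-B, sequential runs: {seq_sd:.3f}")
    print(f"spread of A-B, concurrent runs: {con_sd:.3f}")
```

In this model the shared environmental term cancels in the concurrent difference, which is why a paired comparison needs fewer runs to separate two protocols. Whether that benefit survives inter-protocol interference on a real testbed is an empirical question that the model does not answer.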


Capturing the Link and Network State During an Experiment


A text description of the experiment setup, which is the norm, is

usually not enough to reproduce the results of a wireless network

experiment. Such descriptions do not capture the properties of the

testbed that dictate the feasible network topology. Neither do they

capture the wireless dynamics present during the experiment. We design

a metric called Expected Network Delivery that succinctly captures the

state of the links and the network as they pertain to the performance

of a wireless routing protocol. Such a quantitative metric that

describes the experiment environment allows us to compare the results

from wireless network experiments across time and across testbeds.
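
This page does not give the formula for Expected Network Delivery, so the sketch below should not be read as its definition. It is only an illustrative stand-in for a metric of this kind, under stated assumptions: each directed link has a measured packet reception ratio (PRR), links fail independently, there are no retransmissions, and each node uses the single path to the sink with the highest end-to-end delivery probability. The names `best_path_delivery` and `mean_delivery` are hypothetical.

```python
import heapq
import math


def best_path_delivery(links, sink):
    """Best end-to-end delivery probability from each node to the sink.

    links: dict mapping (sender, receiver) -> PRR in [0, 1].
    Assumption: path delivery is the product of link PRRs (no retransmissions).
    Maximizing a product of PRRs equals minimizing the sum of -log(PRR),
    so Dijkstra's algorithm applies, run outward from the sink over
    reversed links.
    """
    incoming = {}
    nodes = {sink}
    for (src, dst), prr in links.items():
        nodes.update((src, dst))
        if prr > 0.0:
            incoming.setdefault(dst, []).append((src, -math.log(prr)))

    cost = {sink: 0.0}
    heap = [(0.0, sink)]
    while heap:
        c, node = heapq.heappop(heap)
        if c > cost.get(node, math.inf):
            continue  # stale heap entry
        for src, weight in incoming.get(node, []):
            new_cost = c + weight
            if new_cost < cost.get(src, math.inf):
                cost[src] = new_cost
                heapq.heappush(heap, (new_cost, src))

    # Nodes with no usable path to the sink get delivery probability 0.
    return {n: (math.exp(-cost[n]) if n in cost else 0.0)
            for n in nodes if n != sink}


def mean_delivery(links, sink):
    """Network-wide summary: mean of the per-node best-path probabilities."""
    per_node = best_path_delivery(links, sink)
    if not per_node:
        return 0.0
    return sum(per_node.values()) / len(per_node)


if __name__ == "__main__":
    # Hypothetical three-node topology, not measured data.
    example_links = {("a", "sink"): 0.9, ("b", "a"): 0.8, ("b", "sink"): 0.5}
    print(best_path_delivery(example_links, "sink"))
    print(mean_delivery(example_links, "sink"))
```

A single number of this kind, recorded alongside the results, summarizes what the links allowed during a run, which is the role the text assigns to Expected Network Delivery. The published definition may weight nodes, links, or retransmissions differently.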


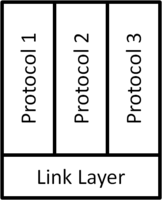

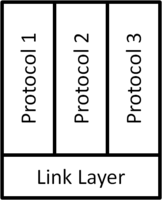

Benchmark for Wireless Network Protocols


Although a large number of networking protocols are available in

wireless and sensor networks, there is a lack of standardized

performance tests to evaluate these protocols. In this project, we

design a set of benchmark tests that will standardize the performance

evaluation for Collection and Dissemination protocols. Such a

benchmark suite should allow us to systematically compare the

performance of protocols of the same category and identify the best

protocols. Benchmark suites will also make it possible for evaluation

results from different research projects to be more meaningfully

compared. This is ongoing work in collaboration with the TinyOS

Network Protocol Working Group.


Publications

Daniele Puccinelli, Omprakash Gnawali, SunHee Yoon, Silvia Santini,
Ugo Maria Colesanti, Silvia Giordano, and Leonidas Guibas, The Impact
of Network Topology on Collection Performance, To appear in
Proceedings of the 8th European Conference on Wireless Sensor Networks
(EWSN 2011), February 2011. Acceptance rate: 20%.


Omprakash Gnawali, Leonidas Guibas, and Philip Levis, A Case for
Evaluating Sensor Network Protocols Concurrently, In Proceedings of
the Fifth ACM International Workshop on Wireless Network Testbeds,
Experimental Evaluation and Characterization (WiNTECH 2010),
September 2010.
